Business Wire: Aptina and Sony have signed a patent cross-license agreement, which provides each company with access to the other’s patent portfolio. The agreement enables the two companies to operate freely and use each other's patented inventions to advance the pace of development for cameras and other imaging applications. The cooperation fostered by the cross-license reinforces the ability of both companies to provide compelling imaging solutions to their customers.

"Patents and innovation are a critical component of Aptina’s strategy, and Aptina’s patent portfolio is the largest and strongest in the image sensor industry," said Bob Gove, President and CTO of Aptina. "We believe that this powerful blend will advance technology to realize our goal of enabling consumers to capture beautiful images and visual information."

Thursday, February 28, 2013

Camera Phone Overviews

Two quite different overviews of the camera phone industry were published recently. The first, published by AnandTech, is "Understanding Camera Optics & Smartphone Camera Trends" by Brian Klug. It goes briefly through various technical challenges in camera phone design.

The second review comes from Samsung Securities and covers the supply chain for 13MP camera phones, which is quite complex and multi-sourced:

Wednesday, February 27, 2013

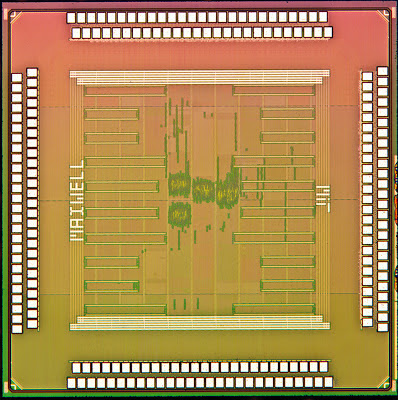

MIT Presents Computational Photography Processor

MIT’s Microsystems Technology Laboratory presents a processor optimized for computational photography tasks. In one example, the chip can build a 10MP HDR picture out of 3 frames "in a few hundred milliseconds". Other examples include combining two pictures taken with and without flash, adaptively brightening the dark parts of a picture, noise reduction with a bilateral filter, and more.

The chip offers a hardware solution to some important problems in computational photography, says Michael Cohen at Microsoft Research in Redmond, Wash. "As algorithms such as bilateral filtering become more accepted as required processing for imaging, this kind of hardware specialization becomes more keenly needed," he says.

The power savings offered by the chip are particularly impressive, says Matt Uyttendaele, also of Microsoft Research. "All in all [it is] a nicely crafted component that can bring computational photography applications onto more energy-starved devices," he says.

The work was funded by the Foxconn Technology Group, based in Taiwan.
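For readers unfamiliar with the bilateral filter mentioned above, here is a minimal one-dimensional sketch in plain Python. It is only an illustration of the general technique, not the MIT chip's implementation; the function name, the parameters (`radius`, `sigma_space`, `sigma_range`) and the sample data are all assumptions made for this example.

```python
import math

def bilateral_filter_1d(signal, radius=2, sigma_space=1.0, sigma_range=10.0):
    """Edge-preserving smoothing of a 1-D list of pixel values (illustrative only)."""
    n = len(signal)
    out = []
    for i in range(n):
        weighted_sum = 0.0
        weight_total = 0.0
        for j in range(max(0, i - radius), min(n, i + radius + 1)):
            # Spatial weight: samples closer to position i count more.
            w_space = math.exp(-((i - j) ** 2) / (2 * sigma_space ** 2))
            # Range weight: samples with a similar intensity count more,
            # so values across a strong edge contribute almost nothing.
            w_range = math.exp(-((signal[i] - signal[j]) ** 2) / (2 * sigma_range ** 2))
            w = w_space * w_range
            weighted_sum += w * signal[j]
            weight_total += w
        out.append(weighted_sum / weight_total)
    return out

# Hypothetical noisy step edge: a dark region followed by a bright one.
noisy_edge = [10, 12, 9, 11, 10, 200, 202, 199, 201, 200]
smoothed = bilateral_filter_1d(noisy_edge)
print([round(v, 1) for v in smoothed])
```

The noise on each side of the step is averaged out while the step itself stays sharp, which is why the filter is popular for denoising. The two nested loops also hint at why dedicated hardware is attractive for multi-megapixel frames.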

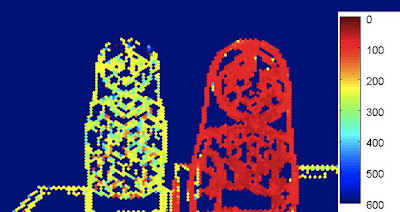

Toshiba Light Field Camera Module to have 2 to 6 MP Resolution

TechHive: Toshiba showed its recently announced light field camera to IDG News Service at its research laboratory in Kawasaki, just outside of Tokyo. The cube-shaped camera module measures about 8mm on each side. It currently uses an 8MP image sensor to produce images with a resolution of 2MP at 30fps. "We use much of the data not for resolution, but for determining distance," said Hideyuki Funaki, a researcher at Toshiba. A future version of the module will use a 13MP sensor to produce light-field images with 5 or 6 MP resolution.

Toward production of the new camera modules sometime in 2014, Toshiba is working to improve its algorithms, to attain better speed and distance accuracy as well as lessen the load on a phone’s central processor.

Update: Toshiba Review, Oct. 2012 published a paper on this camera (in Japanese).

Figure: The image from the Toshiba light field camera shows the distance of two dolls by color, with the scale marked in millimeters.

Tuesday, February 26, 2013

Aptina Announces Clarity+ Technology

Business Wire: Aptina announces Clarity+ technology, which departs from the traditional RGB color filter approach to more than double camera sensitivity and dramatically increase color and detail in low-lit scenes. Clarity+ technology is said to improve the performance of 1.1um sensors so that they surpass today’s top 1.4um BSI pixel sensors. Further, leveraging Clarity+ technology with future generations of pixels, including 0.9um pixels, will power mobile phones to greater than 20 megapixels.

Attempts to advance the standard Bayer pattern, which has been the standard since the 1970s, have not been successful until now. Aptina Clarity+ technology combines CFA, sensor design and algorithm developments and is fully compatible with today’s standard sub-sampling and defect correction algorithms, which means no visible imaging artifacts are introduced. While BSI technology innovation drove 1.4um pixel adoption, Clarity+ technology will drive 1.1um pixel adoption into the smartphone market. Additionally, this technology works seamlessly with 4th generation Aptina MobileHDR technology, increasing DR for both snapshot and video captures by as much as 24dB.

"Clarity+ technology enables a substantial improvement in picture clarity when capturing images with mobile cameras. While many others have introduced technologies that increase the sensitivity of cameras with clear pixel or other non-Bayer color patterns and processing, Aptina’s Clarity+ technology achieves a doubling of sensitivity, but uniquely without the introduction of annoying imaging artifacts," said Bob Gove, President and CTO at Aptina. "This optimized performance is enabled by innovations in the sensor and color filter array design, along with advancements in control and image processing algorithms. Our total system approach to innovation has led prominent OEMs to confirm that Clarity+ technology has clearly succeeded in producing a new level of performance for 1.1um pixel image sensors. We see Clarity+ technology playing a significant role in our future products, with initial products in the Smartphone markets."

Aptina will incorporate this innovative technology into a complete family of 1.1um and 1.4um based products addressing both front and rear facing applications. The AR1231CP 12MP, 1.1um mobile image sensor is the first sensor to support Clarity+ technology. This 1/3.2-inch BSI sensor runs at 60fps at full resolution and supports 4K video at 30fps. The Aptina AR1231CP is now sampling.

NIT Extends its HDR Product Range to InGaAs

NIT announces the WiDy SWIR camera, based on an InGaAs sensor operating in the 900nm - 1700nm range. The WiDy SWIR camera uses an InGaAs PD array of 320x256 25um pixels coupled to the NIT NSC0803 WDR ROIC. The DR of the camera is said to exceed 140dB.
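To put the 140dB figure in perspective, the short sketch below converts between decibels and a linear signal ratio. It assumes the usual image sensor convention of DR = 20·log10(largest signal / smallest detectable signal); the function names are made up for this example.

```python
import math

def db_to_ratio(db):
    """Convert a dynamic range in dB to a linear ratio (20*log10 convention assumed)."""
    return 10 ** (db / 20)

def ratio_to_db(ratio):
    """Convert a linear signal ratio to dB (20*log10 convention assumed)."""
    return 20 * math.log10(ratio)

widy_ratio = db_to_ratio(140)
print(f"140 dB corresponds to a ratio of about {widy_ratio:.0e} : 1")
print(f"A 2x change in signal is about {ratio_to_db(2):.1f} dB")
```

Under that convention, 140dB means the brightest measurable signal is about ten million times the smallest one.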

Here is how the world looks in 900-1700nm band:

A Youtube video shows the DR:

Sony and Stanford University Develop Compressive Sensing Imager

IEEE Spectrum: Oike from Sony and Stanford University Prof. El Gamal have designed a compressive image sensor. The 256- by 256-pixel image sensor contains associated electronics that can sum random combinations of analog pixel values as it is making its A-to-D conversions. The digital output of this chip is thus already in compressed form. Depending on how it’s configured, the chip can slash energy consumption by as much as a factor of 15.

The chip is not the first to perform random-pixel summing electronically, but it is the first to capture many different random combinations simultaneously, doing away with the need to take multiple images for each compressed frame. This is a significant accomplishment, according to other experts. "It’s a clever implementation of the compressed-sensing idea," says Richard Baraniuk, a professor of electrical and computer engineering at Rice University, in Houston, and a cofounder of InView Technology.
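The idea of summing random combinations of pixels can be sketched in a few lines of Python. This is a toy model of the measurement step only, under the assumption of binary (include/exclude) random masks; the block size, the number of measurements and the function names are hypothetical, and the reconstruction step (which needs a sparse solver) is left out.

```python
import random

def random_masks(num_measurements, num_pixels, seed=0):
    """Generate one random 0/1 selection mask per measurement (assumed binary masks)."""
    rng = random.Random(seed)
    return [[rng.randint(0, 1) for _ in range(num_pixels)]
            for _ in range(num_measurements)]

def compress(pixels, masks):
    """Each measurement is the sum of the pixel values selected by one mask."""
    return [sum(p for p, m in zip(pixels, mask) if m) for mask in masks]

# Hypothetical 16-pixel block reduced to 4 measurements (4x fewer values to digitize).
pixels = [12, 40, 7, 255, 0, 90, 33, 18, 61, 5, 200, 14, 77, 3, 128, 9]
masks = random_masks(num_measurements=4, num_pixels=len(pixels))
measurements = compress(pixels, masks)
print(measurements)
```

Because only the sums are digitized, fewer A-to-D conversions are needed per frame, which is consistent with the energy saving described above. Computing all the sums for a block in the same exposure corresponds to capturing many random combinations simultaneously.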

Monday, February 25, 2013

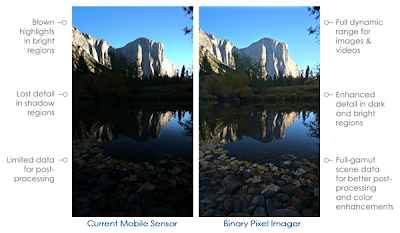

Rambus Announces Binary Pixel Technology

Business Wire: Rambus announces Binary Pixel technology including image sensor and image processing architectures with single-shot HDR and improved low-light sensitivity in video and snapshot modes.

"Today’s compact mainstream sensors are only able to capture a fraction of what the human eye can see," said Dr. Martin Scott, CTO at Rambus. "Our breakthrough binary pixel technology enables a tremendous performance improvement for compact imagers capable of ultra high-quality photos and videos from mobile devices."

This binary pixel technology is optimized at the pixel level to sense light similar to the human eye while maintaining comparable form factor, cost and power of today’s mobile and consumer imagers. The sensor is said to be optimized at the pixel level to deliver DSLR-level dynamic range from mobile and consumer cameras. The Rambus binary pixel has been demonstrated in a proof-of-concept test-chip and the technology is currently available for integration into future mobile and consumer image sensors.

Benefits of binary pixel technology:

- Improved image quality optimized at the pixel level

- Single-shot HDR photo and video capture operates at high-speed frame-rates

- Improved signal-to-noise performance in low-light conditions

- Extended dynamic range through variable temporal and spatial oversampling

- Silicon-proven technology for mobile form factors

- Easily integratable into existing SoC architectures

- Compatible with current CMOS image sensor process technology

Rambus published a demo video showing its Digital Pixel capabilities.

Compound Eyes: from Biology to Technology

The "Compound Eyes: from Biology to Technology" workshop is to be held in Tübingen, Germany on March 26-28, 2013. The workshop will bring together students, researchers, and industry from Neuroscience, Perception, Microsystems Technology, Applied Optics, and Robotics to discuss recent developments in the understanding of compound-eye imaging, the construction of artificial compound eyes, and the application of artificial compound eyes in robotics or ubiquitous computing. It will include a presentation and live demos of the CURVACE prototypes.

Sessions:

- Compound Optics and Imaging

- Motion detection and Circuits

- Optic Flow

- Applications

ST Enters ToF Sensor Market

ST announces the VL6180 proximity sensor for smartphones, offering "unprecedented accuracy and reliability in calculating the distance between the smartphone and the user". Instead of estimating distance by measuring the amount of light reflected back from the object, which is significantly influenced by color and surface, the sensor precisely measures the time the light takes to travel to the nearest object and reflect back to the sensor. This ToF approach ignores the amount of light reflected back and only considers the time for the light to make the return journey.

ST’s solution is an infra-red emitter that sends out light pulses, a fast light detector that picks up the reflected pulses, and electronic circuitry that accurately measures the time difference between the emission of a pulse and the detection of its reflection. Combining three optical elements in a single compact package, the VL6180 is the first member of ST’s FlightSense family and uses the ToF technology.
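The time-to-distance relation behind this approach is simply distance = c × t / 2, since the pulse travels to the object and back. The sketch below works through the arithmetic; the 10cm example distance and the function names are assumptions for illustration, not VL6180 specifications.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact value by SI definition

def tof_distance_m(round_trip_time_s):
    """Distance to the object from the measured round-trip time of a light pulse."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2

def round_trip_time_s(distance_m):
    """Round-trip time the timing circuitry must resolve for a given distance."""
    return 2 * distance_m / SPEED_OF_LIGHT_M_PER_S

# Hypothetical example: a face held 10 cm from the phone.
t = round_trip_time_s(0.10)
print(f"10 cm -> round trip of {t * 1e12:.0f} ps")
print(f"back to distance: {tof_distance_m(t) * 100:.1f} cm")
```

At proximity-sensor distances the round trip lasts well under a nanosecond, which is why a fast detector and accurate timing circuitry are the key parts of the design.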

"This marks the first time that Time-of-Flight technology has been made available in a form factor small enough to integrate into the most space-constrained smartphones," said Arnaud Laflaquière, GM of ST’s Imaging Division. "This technology breakthrough brings a major performance enhancement over existing proximity sensors, solving the face hang-up issues of current smartphone and also enabling new innovative ways for users to interact with their devices."

Update: An ST backgrounder says that the ST roadmap includes 2D and 3D ToF sensors. No other details are disclosed.

Aptina Announces 1.1um and 1.4um Pixel TSMC-Manufactured Sensors

Business Wire: It's official now: Aptina uses TSMC 300mm wafer process. Aptina announces TSMC-manufactured 1080p/60fps video sensor with HDR capability. The 1/6-inch AR0261 features a 1.4um pixel and 4th generation MobileHDR technology. Additionally, gesture recognition and 3-D video capture are possible with AR0261. Accurate gesture recognition can be enabled by the fast frame rate, while Aptina’s 3-D technology synchronizes two AR0261 output data streams to work collaboratively for 3-D.

"Designers choosing the AR0261 will find new and exciting ways to enable users to interact with their favorite devices," said Aptina’s Roger Panicacci, VP of Product Development. "Gesture recognition, 3-D and HDR will open a whole new world of personal interaction with these devices."

The AR0261 is sampling now and will be in production by summer of 2013.

Business Wire: Aptina announces 12MP AR1230 and 13MP AR1330 mobile sensors featuring 1.1um pixels. Both sensors feature 4th generation MobileHDR technology which is said to increase DR as much as 24dB. The AR1230 captures 4K video at 30fps as well as 1080P video up to 96fps. The AR1330 provides electronic image stabilization support in 1080P mode while capturing video in both 4K and 4K Cinema formats at 30fps. Additionally, both sensors support advanced features like super slow motion video, new zoom methodologies, computational array imaging and 3D image capture.

"Built on Aptina’s smallest, and most advanced 1.1-micron pixel technology, the AR1230 and AR1330 image sensors provide the high resolution, impressive low-light sensitivity, and advanced features manufacturers of high end Smartphone are looking for," said Gennadiy Agranov, Pixel CTO and VP at Aptina. "These sensors are the first of a family of new, high quality 1.1-micron based products being sampled by our customers, and which Aptina will be delivering in the coming years."

The AR1230 is now in production and is available for mass production orders immediately. The TSMC-manufactured AR1330 is sampling now and will be in production by summer of 2013.

ISSCC 2013 Report - Part 4

Albert Theuwissen continues his reports from ISSCC 2013. Part 4 covers two papers:

- "A 3D vision 2.1 Mpixels image sensor for single-lens camera systems", by S.Koyama of Panasonic

- "A 187.5 uVrms read noise 51 mW 1.4 Mpixel CMOS image sensor with PMOSCAP column CDS and 10b self-differential offset-cancelled pipeline SAR-ADC", by J. Deguchi of Toshiba

Toshiba Announces Low Power Innovations, Presents them at ISSCC

Business Wire: Toshiba announces a CMOS sensor technology with a small area and low power pixel readout circuits. A sample sensor embedded with the readout circuits shows double the performance of a conventional one (compared using FOM = power x noise / (pixels x frame rate)). Toshiba presented this development at ISSCC 2013 in San Francisco, CA on Feb. 20.
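The figure of merit quoted above can be evaluated directly. In the sketch below, all sensor numbers are hypothetical placeholders chosen only to show how the formula behaves; they are not Toshiba's measurements. Since power and noise sit in the numerator, a lower FOM is read here as better, so "double the performance" is taken to mean half the FOM (an interpretation, not something the announcement spells out).

```python
def readout_fom(power_w, noise_vrms, pixels, frame_rate_fps):
    """FOM = power x noise / (pixels x frame rate); lower is assumed to be better."""
    return power_w * noise_vrms / (pixels * frame_rate_fps)

# Hypothetical sensors: same noise, resolution and frame rate, half the power.
conventional = readout_fom(power_w=0.100, noise_vrms=200e-6,
                           pixels=1.0e6, frame_rate_fps=30)
improved = readout_fom(power_w=0.050, noise_vrms=200e-6,
                       pixels=1.0e6, frame_rate_fps=30)

print(f"conventional FOM: {conventional:.3e}")
print(f"improved FOM:     {improved:.3e}")
print(f"ratio (conventional / improved): {conventional / improved:.2f}")
```

The same 2x ratio could equally come from halving the noise or doubling the frame rate at constant power, which is why a combined metric is used for such comparisons.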

Toshiba has developed three key technologies to overcome these challenges:

- Column CDS circuits primarily made up of area-efficient PMOS capacitors. The area of the CDS circuits is reduced to about half that of conventional circuits.

- In the readout circuits, a level shift function is simultaneously achieved by a capacitive coupling through the PMOS capacitors, allowing adjustment of the signal dynamic range between the column CDS circuits and the PGA and the ADC. This achieves low power and low voltage implementation of the PGA and ADC, reducing their power consumption by 40%.

- Implementation of a low power switching procedure in the ADC suited to processing the pixel signals of CMOS image sensors. This reduces the switching power consumption of the ADC by 80%.

Pelican Imaging Capabilities

Pelican Imaging updated its web site, logo, Technology page and published a new Youtube video:

Update: PRWeb: Pelican Imaging will be giving private demonstrations in Barcelona, February 25-28, 2013. Pelican Imaging’s camera is said to be 50% thinner than existing mobile cameras, and allows users to perform a range of selective focus and edits, both pre- and post-capture.

"Our technology is truly unique and radically different than the legacy approach. Our solution gives users a way to interact with their images and video in wholly new ways," said Pelican Imaging CEO and President Christopher Pickett. "We think users are going to be blown away by the freedom to refocus after the fact, focus on multiple subjects, segment objects, take linear depth measurements, apply filters, change backgrounds, and easily combine photos, from any device."

Sunday, February 24, 2013

Scientific Detector Workshop 2013

Scientific Detector Workshop is to be held on Oct 7-11, 2013 in Florence, Italy.

Papers will be presented on the following topics:

- Status and plans for astronomical facilities and instrumentation (ground & space)

- Earth and Planetary Science missions and instrumentation

- Laboratory instrumentation (physical chemistry, synchrotrons, etc.)

- Detector materials (from Si and HgCdTe to strained layer superlattices)

- Sensor architectures – CCD, monolithic CMOS, hybrid CMOS

- Sensor electronics

- Sensor packaging and mosaics

- Sensor testing and characterization

- Mark McCaughrean & Mark Clampin "Space Astronomy Needs"

- Bonner Denton "Laboratory Instrumentation"

- Roland Bacon "MUSE - Example of Imaging Spectroscopy"

- Ian Baker & Johan Rothman "HgCdTe APDs"

- Jean Susini "Synchrotrons"

- Rolf Kudritzki "Stellar Astrophysics: Perspectives on the Evolution of Detectors"

- Jim Gunn "Why Imaging Spectroscopy?"

- Harald Michaelis "Planetary Science"

- Robert Green "Imaging Spectroscopy for Earth Science and Exoplanet Exploration"

Thanks to AT for the link!

ISSCC 2013 Report - Part 3

Albert Theuwissen continues reports from ISSCC 2013. The third part talks about three fast sensors:

- L. Braga (FBK, Trento) "An 8×16 pixel 92kSPAD time-resolved sensor with on-pixel 64 ps 12b TDC and 100MS/s real-time energy histogramming in 0.13 um CIS technology for PET/MRI applications"

- C. Niclass (Toyota) "A 0.18 um CMOS SoC for a 100m range, 10 fps 200×96 pixel Time of Flight depth sensor"

- O. Shcherbakova (University of Trento) "3D camera based on linear-mode gain-modulated avalanche photodiodes"

Saturday, February 23, 2013

JHU Mark Foster Develops 100Mfps Continuously Recording Camera

Mark Foster, an assistant professor in the Department of Electrical and Computer Engineering at Johns Hopkins’ Whiting School of Engineering, has developed a system that can continuously record images at a rate of more than 100 million frames per second. He has been awarded the National Science Foundation’s Faculty Early Career Development (CAREER) Award, a five-year, $400,000 grant. The resolution of the camera is not stated.

Foster, who came to Johns Hopkins in 2010, works in the area of non-linear optics and ultrafast lasers – measuring phenomena that occur in femtoseconds. "With this project, we hope to create the fastest video device ever created," he explained.

Unfortunately, Mark Foster's home page has no explanation on how the new camera works. His publications page mostly links to high-speed optical communication papers, rather than imaging.

ISSCC 2013: Panasonic Single-Lens 3D Imager

Tech-On: Panasonic presents 2.1MP image sensor implementing 3D imaging with a single lens:

Panasonic plans to use this technology in industrial and mobile products appearing in 2014.

Figure: DML = Digital MicroLens, made with patterns smaller than the wavelength.

Aptina and Sony Support Nvidia Chimera Technology

Nvidia has recently announced the name of its Computational Photography Architecture, Chimera, used in the Tegra 4 family of application processors. HDR is the main differentiator of this technology, including hardware-assisted HDR panorama stitching. The image sensor partners announced at the launch are Sony, with the IMX135 13MP stacked sensor, and Aptina, with the AR0833 1/3-inch 8MP BSI sensor.

Business Wire: Aptina says that its MobileHDR technology enables Chimera architecture support. MobileHDR technology is integrated into a number of Aptina’s high-end current and future mobile products including the AR0835 (8MP), AR1230 (12MP), and the AR1330 (13MP).

Fraunhofer Adapts Gesture Recognition to Car Quality Control

Researchers at the Fraunhofer Institute for Optronics, System Technologies and Image Exploitation (IOSB) in Karlsruhe, Germany have developed an efficient type of quality control: with a pointing gesture, employees can enter any defects detected on car body parts into the inspection system. The system, which fuses 2D and 3D cameras, was developed on behalf of the BMW Group:

Figure: A point of the finger is all it takes to send the defect in the paint to the QS inspection system, store it and document it.

Friday, February 22, 2013

ISSCC 2013 Report - Part 2

Albert Theuwissen continues his excellent ISSCC report series. The second part talks about stacked sensors:

Olympus presented "A rolling-shutter distortion-free 3D stacked image sensor with -160 dB parasitic light sensitivity in-pixel storage node", by J. Aoki

Sony presented "A 1/4-inch 8M pixel back-illuminated stacked CMOS image sensor" by S. Sukegawa

From what Albert writes, I'd bet that Olympus was granted early access to Sony's stacked sensor process, so that both presentations rely on the same technology.

ISSCC 2013: Sony Stacked Sensor Presentation

Tech-On published an article and a few slides from the Sony 8MP, 1.12um-pixel stacked sensor presentation at ISSCC 2013. The 90nm-processed pixel layer has only high voltage transistors and also contains row drivers and the comparator part of the column-parallel ADCs, with TSV interconnects on the periphery. The bottom logic area is processed in 65nm with LV and HV transistors, and integrates the counter portion of the column ADCs, row decoders, ISP, timing controls, etc.

Sony does not tell the exact details of its TSV processing, but shows the stacked chips cross-section:

The sensor spec slide mentions 5Ke full well - very impressive for a 1.12um pixel:

Tech-On article shows many more slides from the presentation.

Image Sensor Sales to Reach $10.75 Billion in 2018

PRNewswire: According to the report "Image Sensors Market Analysis & Forecast (2013 - 2018): By Applications (Healthcare (Endoscopy, Radiology, Ophthalmology), Surveillance, Automobile, Consumer, Defense, Industrial); Technology (CCD, CMOS, Contact IS, Infrared, X-Ray) And Geography", published by MarketsandMarkets, the image sensor market was worth $8.00 billion in 2012 and is expected to reach $10.75 billion in 2018, at an estimated CAGR of 3.84% from 2013 to 2018. For comparison, global semiconductor sales in 2012 were $291.6 billion, according to SIA. In terms of volume, the total number of image sensors shipped is estimated to be 1.6 billion in 2013 and is expected to reach 3 billion by 2018.
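For readers who want to check growth figures like these, the sketch below shows the standard CAGR arithmetic. It assumes five compounding periods between 2013 and 2018; the report's 2013 market value is not given here, so `implied_2013` is only what the quoted rate and 2018 target would imply, not a number from the report.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values separated by `years` periods."""
    return (end_value / start_value) ** (1 / years) - 1

def project(start_value, rate, years):
    """Value after compounding `rate` for `years` periods."""
    return start_value * (1 + rate) ** years

# Figures quoted in the text (in $ billion).
value_2012 = 8.00
value_2018 = 10.75
quoted_rate = 0.0384  # stated as applying from 2013 to 2018

# Assumption: 2013 -> 2018 spans 5 compounding periods.
implied_2013 = value_2018 / (1 + quoted_rate) ** 5
rate_2012_2018 = cagr(value_2012, value_2018, 6)

print(f"2013 value implied by the quoted CAGR: ${implied_2013:.2f}B")
print(f"CAGR computed from the 2012 and 2018 figures: {rate_2012_2018:.2%}")
```

The two results differ because the quoted rate starts from 2013 rather than 2012, so most of the step up from $8.00 billion would have to occur during 2013 for the figures to be consistent.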

The key statements in the report:

- The global image sensors market is estimated to grow at a modest CAGR of 3.84% from 2013 to 2018 and is expected to exceed $10.75 billion by the end of these five years.

- Currently, camera phones are the major contributors to this market. In 2012, approximately 80% of image sensors were shipped for this application.

- Emerging applications such as medical imaging, POV cameras, UAV cameras, digital radiology, and factory automation are driving the market with their high growth rates. However, they are low-volume applications and will need more time to have a heavy impact on the market.

- CMOS technology commands a major market share against CCD and contact image sensor types. In 2012, CMOS had approximately 85% of the market.

- Apart from the visible spectrum domain, manufacturers are also focusing on infrared and X-ray image sensors. By 2018, approximately 800,000 infrared sensors and 300,000 X-ray sensors are forecast to be shipped.

- Currently, North America holds the largest share, but APAC is expected to surpass it in 2013 thanks to its strong consumer electronics market.

[Figure: Image sensor sales by region]

Thursday, February 21, 2013

Nikon DSLR Abandons OLPF

Nikon follows in Fujifilm's footsteps and abandons the optical low-pass filter in its new 24.1MP DX-format DSLR, the D7100. Nikon's PR says: "The innovative sensor design delivers the ultimate in image quality by defying convention; because of the high resolution and advanced technologies, the optical low pass filter (OLPF) is no longer used. Using NIKKOR lenses, the resulting images explode with more clarity and detail to take full advantage of the 24.1-megapixel resolution achieved with D7100’s DX-format CMOS sensor." No further details of these "advanced technologies" have been given so far.

ISSCC 2013 Report - Part 1

Albert Theuwissen published the first part of his report from ISSCC 2013. So far two presentations are covered:

"A 3.4 uW CMOS image sensor with embedded feature-extraction algorithm for motion-triggered object-of-interest imaging" by J. Choi

"A 467 nW CMOS visual motion sensor with temporal averaging and pixel aggregation" by G. Kim

New Market: Set-top Box with Camera

Electronics Weekly, AllThingsD: Intel intends to sell set-top boxes equipped with a camera. The box is said to be able to identify each individual watcher in front of a domestic TV. The information gathered will allow companies to target advertising at specific telly-watching individuals. The box will be sold by the mysterious Intel Media division. Erik Huggers, VP of Intel Media, says that it delivers a TV which "actually cares about who you are". Intel says several hundred of its employees are currently testing the box in their homes and it hopes to launch the service this year.

To recognize a watcher reliably, the STB camera needs quite a high resolution and good low-light sensitivity. If Intel's approach is widely adopted, STBs might become the next big market for image sensors.

Microsoft Sold 24M Kinects

On Feb 11, 2013 Microsoft announced that it has sold 24M Kinect units since the product was introduced in Nov. 2010. The previous sales announcements were:

- 8M units in the first 60 days of sales (Nov-Dec 2010)

- 18M units about a year after the launch (Jan. 2012, Reuters)

So, there appears to be a significant slowdown in Kinect sales.
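The slowdown can be put into rough numbers by turning the three cumulative announcements into average monthly sales rates. This is a back-of-the-envelope sketch: the month offsets from the Nov. 2010 launch are approximations chosen for illustration, and the helper name `monthly_rates` is hypothetical.

```python
# (label, cumulative units sold in millions, approximate months since launch)
# Assumption: month offsets are rounded from the dates in the announcements.
milestones = [
    ("launch (Nov 2010)", 0.0, 0),
    ("first 60 days", 8.0, 2),
    ("Jan 2012", 18.0, 14),
    ("Feb 2013", 24.0, 27),
]


def monthly_rates(points):
    """Average units per month (in millions) between consecutive milestones."""
    rates = []
    for (_, units0, month0), (label, units1, month1) in zip(points, points[1:]):
        rates.append((label, (units1 - units0) / (month1 - month0)))
    return rates


rates = monthly_rates(milestones)
for label, rate in rates:
    print(f"up to {label}: {rate:.2f}M units/month")
```

With these approximate dates, the average rate falls from about 4M units per month in the launch window to under 1M per month in each of the two later periods.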

Wednesday, February 20, 2013

poLight Shows Touch and Refocus Application

In this Vimeo video poLight's Marketing & Sales VP François Vieillard shows a new application for the company's AF actuator:

Kohzu Announces Precision Sensor Alignment, Image Stabilization Tester

Japan-based Kohzu announces two new products: an Image Sensor 6-axes Alignment Unit and an image stabilization tester.

The aligner can position an image sensor with an accuracy of 1.5um in the X-Y directions, 3um in depth, 0.1 arc-minute in tilt and 3 arc-minutes in rotation:

The 2-axes (Pitch, Yaw) Blur Vibration Simulator is said to be compliant with CIPA rules (CIPA DC-011-2012 "Measurement method and notation method related with offset function of blur vibration of digital camera"):

JKU Announces Paper on its Transparent Sensor

Eureka, Business Wire: JKU and Optics Express announce a new paper "Towards a transparent, flexible, scalable and disposable image sensor using thin-film luminescent concentrators" by Alexander Koppelhuber and Oliver Bimber. The approach has already been covered in this blog. Alexander Koppelhuber's MSc thesis on the same matter can be downloaded here.

Tuesday, February 19, 2013

DigitalOptics Corporation Launches mems|cam

Business Wire: DOC announces the first members of its MEMS-AF enabled camera module family: three 8MP cameras based on Omnivision and Sony 1.4um pixel sensors:

DOC’s mems|cam modules are said to deliver industry-leading AF speed. A fast settling time (typically less than 10 ms) combined with precise location awareness results from MEMS technology. DOC’s MEMS autofocus actuators operate on less than 1mW of power (roughly 1% of VCM), thereby extending battery life and reducing thermal load on the image sensor, lens, and adjacent critical components. Manufactured with semiconductor processes, DOC’s silicon actuators deliver precise repeatability, negligible hysteresis, and millions of cycles of longevity, ensuring high quality image and video capture for the life of a product. Combined with DOC’s optics design and flip chip packaging, the camera module z-height is just 5.1mm.

DigitalOptics is initially targeting smartphone OEMs in China for its mems|cam modules. "Smartphone OEMs in China are driving innovative new form factors, features, and camera functionality," said Jim Chapman, SVP sales and marketing at DigitalOptics Corporation. "These OEMs recognize the speed, power, and precision advantages of mems|cam relative to existing VCM camera modules."

"We have a strategic relationship with DigitalOptics for mems|cam modules, having recognized the potential advantages of implementing a mems|cam module into our handsets," said Zeng Yuan Qing, vice general manager of Guangdong Oppo Mobile Telecommunications Corp., Ltd, a Chinese smartphone OEM.

Thanks to SF and LM for the links!

HTC Announces Ultrapixel Camera

The HTC One smartphone features an UltraPixel camera "that includes a best-in-class f/2.0 aperture lens and a breakthrough sensor with UltraPixels that gather 300 percent more light than traditional smartphone camera sensors. This new approach also delivers astounding low-light performance and a variety of other improvements to photos and videos."

The camera spec does not state how many pixels the camera has, saying instead that the sensor is of BSI type, has 2.0um pixels and a 1/3-inch format. The camera has an F2.0 lens with OIS. Both front and back cameras support HDR in stills and video mode.

Speaking of HDR, Nvidia's new Tegra 4i is the second application processor that supports "always-on HDR" imaging.

Update: CNET published comments by Symon Whitehorn, HTC's director of special projects, on why the HTC flagship smartphone has only 4MP resolution: "It's a risk, it's definitely a risk that we're taking. Doing the right thing for image quality, it's a risky thing to do, because people are so attached to that megapixel number."

The HTC Ultrapixel page shows a few illustrations:

[Figure: HTC One vs iPhone 5 comparison]

HTC's marketing efforts take aim at high-megapixel sensors:

| HTC Zoe™ Camera with UltraPixels | Typical Smartphone Camera | Difference |
|---|---|---|
| Lens with F2.0 aperture | Lens with F2.8 aperture | 1.96x more light entry than F2.8 |
| 2.0um pixel size | ~1.4um pixel size (on typical 8MP sensors); ~1.1um pixel size (on typical 13MP sensors) | 2.04x more sensitivity than 1.4um; 3.31x more sensitivity than 1.1um |
| 2-axis optical image stabilizer | 2-axis optical image stabilizer | Allowing longer exposure with more stability, resulting in higher quality photos with lower noise and better low-light sensitivity |
| Real-time hardware HDR for video (~84dB) | No video HDR (~54dB) | ~1.5x more dynamic range with 84dB compared to 54dB in the competition |
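The ratios in HTC's comparison table follow from simple geometry, and a short sketch makes the arithmetic explicit. It assumes that light collection scales with the inverse square of the f-number and with pixel area, and that the dynamic range figures use the usual 20·log10 decibel convention; the function names are hypothetical.

```python
def aperture_light_ratio(f_number_fast, f_number_slow):
    """Relative light through two lenses: scales as 1 / f_number**2."""
    return (f_number_slow / f_number_fast) ** 2


def pixel_area_ratio(large_pitch_um, small_pitch_um):
    """Relative light-collecting area of two square pixels."""
    return (large_pitch_um / small_pitch_um) ** 2


light_gain = aperture_light_ratio(2.0, 2.8)   # F2.0 vs F2.8
area_vs_1p4 = pixel_area_ratio(2.0, 1.4)      # 2.0um vs 1.4um pixels
area_vs_1p1 = pixel_area_ratio(2.0, 1.1)      # 2.0um vs 1.1um pixels

# The table's "~1.5x" compares the decibel figures directly.
db_ratio = 84.0 / 54.0

# Assumption: dB = 20 * log10(ratio), so a 30 dB gap is a much larger
# factor when expressed as a linear signal ratio.
linear_dr_ratio = 10 ** ((84.0 - 54.0) / 20.0)

print(f"Aperture gain:        {light_gain:.2f}x")
print(f"Area vs 1.4um pixel:  {area_vs_1p4:.2f}x")
print(f"Area vs 1.1um pixel:  {area_vs_1p1:.2f}x")
print(f"dB ratio:             {db_ratio:.2f}x")
print(f"Linear DR ratio:      {linear_dr_ratio:.1f}x")
```

The first three results reproduce the 1.96x, 2.04x and 3.31x figures in the table. The "~1.5x" dynamic range claim is a ratio of decibel values; under the stated convention, the 30 dB difference would correspond to roughly a 32x difference in linear terms.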

HTC One camera spec:

| Sensor Type | CMOS BSI |
|---|---|
| Sensor Size | 1/3" |
| Sensor Pixel Size | 2um x 2um |
| Camera Full Size Resolution | 2688 x 1520, 16:9 ratio JPEG; shutter speed up to 48fps with reduced motion blur |
| Video Resolutions | 1080P up to 30fps; 720P up to 60fps; 1080P with HDR up to 28fps; 768x432 up to 96fps; H.264 high profile, up to 20Mbps |
| Focal Length of System | 3.82 mm |
| Optical F/# Aperture | F/2.0 |
| Number of Lens Elements | 5P |
| Optical Image Stabilizer | 2-axis, +/- 1 degree (average), 2000 cycles per second |
| ImageChip / ISP Enhancements | HTC continuous autofocus algorithm (~200ms), de-noise algorithm, color shading for lens compensation |
| Maximum frames per second | Up to 8fps continuous shooting |
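Although the spec never quotes a megapixel count, it can be derived from the full-size resolution, and the pixel pitch gives the active-area dimensions. The sketch below uses only numbers from the spec table; the variable names are hypothetical, and the active area is assumed to be exactly the quoted resolution times the pixel pitch (no border pixels).

```python
import math

# Values taken from the HTC One camera spec table.
width_px, height_px = 2688, 1520
pixel_pitch_um = 2.0

total_pixels = width_px * height_px
megapixels = total_pixels / 1e6

# Assumption: active area = resolution x pitch, ignoring any extra
# border or dark reference pixels.
width_mm = width_px * pixel_pitch_um / 1000.0
height_mm = height_px * pixel_pitch_um / 1000.0
diagonal_mm = math.hypot(width_mm, height_mm)

aspect_ratio = width_px / height_px

print(f"Resolution:   {megapixels:.2f}MP")
print(f"Active area:  {width_mm:.3f}mm x {height_mm:.3f}mm")
print(f"Diagonal:     {diagonal_mm:.2f}mm")
print(f"Aspect ratio: {aspect_ratio:.3f} (16:9 = {16 / 9:.3f})")
```

This gives roughly 4.1MP and a diagonal of about 6.2mm, in line with the 4MP figure mentioned in the CNET update and with the nominal diagonal of around 6mm usually associated with the 1/3-inch optical format.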

Monday, February 18, 2013

Adrian Tang on THz Imaging Limitations

Adrian Tang from JPL talks about THz imaging limitations (both transmissive and reflective imaging):

Sunday, February 17, 2013

RFEL Offers FPGA-based Video Stabilization IP

UK-based RFEL announces a Video Stabilization IP Core designed to work on all suitable major FPGAs, although additional performance is available on Xilinx Zynq-7000 SoC devices only. Application examples include driving aids for military vehicles, diverse airborne platforms, targeting systems and remote border security cameras. The IP core corrects two-dimensional translations and rotations, from both static and moving platforms. Frame rates are up to 150fps for resolutions of up to 1080p, with both daylight and infrared cameras. The stabilization accuracy has been shown to be better than ±1 pixel, even when subjected to random frame-to-frame displacements of up to ±25 pixels in the x and y directions and a frame-to-frame rotational variation of up to ±5deg.
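To illustrate what correcting "two-dimensional translations and rotations" involves, here is a minimal sketch that estimates a rigid 2D motion from matched feature points and then undoes it. RFEL does not describe its algorithm, so this is a generic least-squares approach chosen for illustration, not the IP core's method; all function names are hypothetical.

```python
import math


def estimate_rigid_2d(src, dst):
    """Least-squares rotation angle (radians) and translation mapping src to dst."""
    n = len(src)
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(p[0] for p in dst) / n
    dy = sum(p[1] for p in dst) / n
    dot = cross = 0.0
    for (x, y), (u, v) in zip(src, dst):
        ax, ay = x - sx, y - sy      # source point relative to its centroid
        bx, by = u - dx, v - dy      # destination point relative to its centroid
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    tx = dx - (c * sx - s * sy)
    ty = dy - (s * sx + c * sy)
    return theta, tx, ty


def apply_rigid(points, theta, tx, ty):
    """Rotate points by theta about the origin, then translate by (tx, ty)."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]


def stabilize(points, theta, tx, ty):
    """Undo an estimated motion: subtract the translation, rotate back."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * (u - tx) + s * (v - ty), -s * (u - tx) + c * (v - ty))
            for u, v in points]


# Feature positions in a reference frame (arbitrary illustrative values).
reference = [(100.0, 50.0), (400.0, 80.0), (250.0, 300.0), (60.0, 220.0)]

# Simulate a shaken frame at the quoted limits: 5 degrees, (+25, -25) pixels.
shaken = apply_rigid(reference, math.radians(5.0), 25.0, -25.0)

theta, tx, ty = estimate_rigid_2d(reference, shaken)
restored = stabilize(shaken, theta, tx, ty)
max_error = max(math.hypot(rx - x, ry - y)
                for (rx, ry), (x, y) in zip(restored, reference))
print(f"Estimated rotation: {math.degrees(theta):.3f} deg, shift: ({tx:.2f}, {ty:.2f})")
print(f"Max residual after stabilization: {max_error:.2e} pixels")
```

A real stabilizer would also have to find the matched points in noisy video and resample the image, which is where most of the hardware effort is likely to go; the sketch covers only the geometric part.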

Saturday, February 16, 2013

Junichi Nakamura Interviewed by IS2013

The Image Sensors 2013 conference in London, UK published an interview with Junichi Nakamura, Director at Aptina. A few quotes:

Q: You have been involved in CMOS development since the beginning at JPL, what are the key development milestones you have witnessed?

A: "I think the key development milestones were; An introduction of the pinned photodiode (that was commonly used for the interline transfer CCDs) to the CMOS pixel, a shared pixel scheme for pixel size reduction and improvements in FPN suppression circuitry.

By the way, I consider then R&D efforts on on-chip ADC at JPL (led by Dr. Eric Fossum) was the origin of the current success of the CMOS image sensor.

Another aspect is; Big semiconductor companies acquired key start-up companies in late 1990's - early 2000's to establish CMOS image sensor business quickly. Examples include; STMicro acquired VVL, Micron acquired Photobit and Cypress acquired FillFactory.

Also, the biannual Image Sensor workshop has been playing an important role to share and discuss progress in CMOS imaging technologies."

Q: CMOS performance continues to improve with each new generation - what's the current R&D focus at Aptina?

A: "High-speed readout, while improving noise performance together, is the strength in our design. We will continue to focus on this. At the same time, our R&D group in the headquarter in San Jose focuses on pixel performance improvements."

Friday, February 15, 2013

TSMC Proposes Stacked BSI Process

TSMC patent application US20130020662 "Novel CMOS Image Sensor Structure" by Min-Feng KAO, Dun-Nian Yaung, Jen-Cheng Liu, Chun-Chieh Chuang, and Wen-De Wang presents a stacked sensor process. Three wafers are bonded together in a single stack: a BSI sensing wafer 30, a circuit and interconnect wafer 270+360, and a carrier wafer 400:

[Figure: TSMC Stacked Sensor (features not to scale)]

Thursday, February 14, 2013

Two JKU Videos

Johannes Kepler University, Linz, Austria published a YouTube video on its flexible sensor technology:

Another JKU video talks about challenges in rendering of high resolution lightfield images:

Wednesday, February 13, 2013

Chipworks Publishes Sony Stacked Sensor Reverse Engineering Report

Chipworks got hold of a Sony stacked sensor, the ISX014. The 8MP sensor features 1.12um pixels and an integrated high-speed ISP.

Chipworks says: "A thin back-illuminated CIS die (top) mounted to the companion image processing engine die and vertically connected using a through silicon via (TSV) array located adjacent to the bond pads. A closer look at the TSV array in cross section shows a series of 6.0 µm pitch vias connecting the CIS die and the image processing engine."

Thanks to RF for updating me!

[Figure: Cross Section Showing the TSVs]

Tensilica Announces ISP IP, Software Alliances

Tensilica announces IVP, an imaging and video dataplane processor (DPU) for image/video signal processing in mobile handsets, tablets, DTV, automotive, video games and computer vision based applications. "Consumers want advanced imaging functions like HDR, but the shot-to-shot time with the current technology is several seconds, which is way too long. Users want it to work 50x faster. We can give consumers the instant-on, high-quality image and video capture they want," stated Chris Rowen, Tensilica’s founder and CTO.

The IVP is extremely power efficient. As an example, for IVP implemented in an automatic synthesis, P&R flow in 28nm HPM process, regular VT, a 32-bit integral image computation on 16b pixel data at 1080p30 consumes 10.8 mW. The integral image function is commonly used in applications such as face and object detection and gesture recognition.
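For readers unfamiliar with the integral image (also called a summed-area table), the following is a minimal pure-Python sketch of the computation and of why detection algorithms like it: once the table is built, the sum over any rectangle costs four lookups. This is a textbook formulation for illustration, not Tensilica's implementation; the function names are hypothetical.

```python
def integral_image(image):
    """Summed-area table with one extra row and column of zeros.

    table[y][x] holds the sum of image[0..y-1][0..x-1].
    """
    height, width = len(image), len(image[0])
    table = [[0] * (width + 1) for _ in range(height + 1)]
    for y in range(height):
        row_sum = 0
        for x in range(width):
            row_sum += image[y][x]
            table[y + 1][x + 1] = table[y][x + 1] + row_sum
    return table


def box_sum(table, top, left, bottom, right):
    """Sum of pixels in rows top..bottom-1 and columns left..right-1."""
    return (table[bottom][right] - table[top][right]
            - table[bottom][left] + table[top][left])


# Tiny illustrative image.
image = [
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 9],
]
table = integral_image(image)

print(box_sum(table, 0, 0, 3, 3))  # whole image
print(box_sum(table, 1, 1, 3, 3))  # bottom-right 2x2 block: 5 + 6 + 8 + 9
```

The build step is a single pass over the frame, which is the kind of regular, data-parallel workload an imaging DPU is designed to handle.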

Tensilica also announced a number of alliances with software vendors Dreamchip, Almalence, Irida Labs, and Morpho.

EETimes talks about a battle between Tensilica and Ceva imaging IP cores. No clear winner is declared, but Tensilica IVP is said to have more processing power. Another EETimes article talks about ISP IP applications and requirements.

[Figure: The IVP Core Architecture With Sample Memory Sizes Selected]

Invisage Secures Round D Funding

Market Wire: InVisage announces its Series D round of venture funding, led by GGV Capital and including Nokia Growth Partners. The undisclosed amount will be used to begin manufacturing the company's QuantumFilm image sensors, which are currently being evaluated by phone manufacturers. Devices incorporating the technology are expected to ship in Q2 2014. GGV and Nokia Growth Partners join InVisage's existing investors RockPort Capital, InterWest Partners, Intel Capital and OnPoint Technologies.

"InVisage is poised to make a tremendous impact on consumer devices and end users with its QuantumFilm image sensors," said Thomas Ng, founding partner, GGV Capital.

"The innovative QuantumFilm technology from InVisage has the potential to disrupt the market for silicon-based image sensors," said Bo Ilsoe, managing partner of Nokia Growth Partners. "Imaging remains a core investment area for NGP, and it is our belief that InVisage's technology will change how video and images are captured in consumer devices."

"The participation of new investors, including a major handset maker, in this round signals that imaging is a critical differentiator in mobile devices," said Jess Lee, InVisage CEO. "For too long, the image sensor industry has lacked innovation. We are excited to bring stunning image quality and advanced new features that will truly transform this industry."

InVisage QuantumFilm is said to be the world's most light-sensitive image sensor for smartphones. Compared to current camera technologies, the QuantumFilm is said to provide incredible performance in the smallest package, making picture-taking foolproof, even in dimly lit rooms.

Tuesday, February 12, 2013

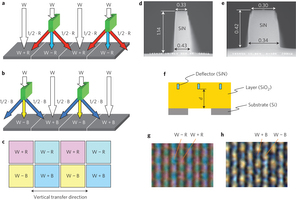

Panasonic Color Splitter Array Paper

The Panasonic Color Splitting Array paper is available in open access on Readcube. The figure below explains the color splitting principle:

New Interviews by IS2013

The Image Sensors 2013 conference in London, UK published two new interviews. Gerhard Holst, Head of R&D at PCO, Germany, talks about sCMOS sensors:

Q: sCMOS claims many advantages compared to more traditional technologies, are there any drawbacks or areas for further research?

A: "I would say that nature doesn't make it easy for you. Certainly there are features that can be improved, for example the blocking efficiency or shutter ratio can be improved, as well some cross talk and as well some lag issues could be improved, but I guess sCMOS has this in common with every new technology. I don't know any new technology, which was perfect from the start."

Q: This technology has been on the market for several years now, what have you learned over this time, and how have you optimized your camera systems?

A: "Since many of our cameras are usually used for precise measurements, we know and learned a lot about the proper control of these image sensors and how each camera has to be calibrated and each pixel has to be corrected. I will address in my presentation some of these issues and show how we have solved it. Further there some characteristics in the noise distribution, that has to be considered."

Ziv Attar, CEO of Linx Imaging talks about multi-aperture imaging:

Q: There's a lot of discussion around multi-aperture imaging right now - the concept has been around for a long time, why do you think it's a hot topic right now?

A: "...Sensors, optics and image processors have been around for quit some time now yet no array camera has been commercialized. ...Multi aperture cameras require heavy processing which was not available on mobile devices until now. 20 years ago we would have needed a super computer to process an image from a multi aperture camera. I think the timing is right due to a combination of technology matureness and market demand..."

Q: Will we see your technology in a commercialized form soon?

A: "Yes. You will. We are devoting all our resources and energy in to commercializing our technology. There are plenty of challenges related to manufacturing of the optics, sensors, module assembly and software optimization, all which require time, hard work and plenty of creativity which is what makes our life fun."

Saturday, February 09, 2013

Crowdsourcing IR Camera Project

While this is slightly off-topic for this blog, I've been following Muoptics' effort on Indiegogo to collect funds for an affordable IR camera project. As far as I know, this is the first attempt to crowdsource a camera company. So far, the project has collected a little over $6.5K in the week since it started. This looks too slow to reach its $200K target by March 10th. Below is a Vimeo video describing the idea:

PR.com: CTO Charles McGrath states that "we can get the retail price of the Mμ Thermal Imager down to $325 initially and with enough orders from the big box retailer we think we may even cut that price considerably. Another goal is to double the resolution within a year."

Friday, February 08, 2013

Tessera DOC Gets New President

Business Wire: Tessera announces that John S. Thode has been appointed president of DigitalOptics Corporation (DOC). Thode will report to Robert A. Young, president and CEO of Tessera.

"DOC has a unique and differentiated MEMS approach to smartphone camera modules that I believe has the potential to revolutionize mobile imaging," said Thode. "I look forward to working with the team to deliver MEMS autofocus camera modules to market and to build on DOC’s emerging role in this exciting space."

Thode, 55, was most recently EVP and GM at McAfee. Before that, Thode was GM of Dell’s Mobility Products Group where he was responsible for leading the strategy and development of Dell’s nontraditional products, including smartphones and tablets. Prior to Dell, Thode was president and CEO at ISCO, a telecom infrastructure company. Thode also spent 25 years with Motorola in various management roles, including GM of its UMTS Handset Products and Personal Communications Sector and GM of its Wireless Access Systems Division in its General Telecoms Systems Sector.

Business Wire: Tessera announces Q4 and 2012 earnings. In Q4 DOC revenue was $10.2M, compared to $7.7M a year ago. The increase was due primarily to sales of fixed focus camera modules of $3.8M that occurred in 2012 but did not occur in 2011, which was partly offset by lower revenues from the company’s image enhancement technologies and weaker demand for the company’s Micro-Optics products.

For the full year 2012, DOC revenue was $41.1M.

Thanks to SF for the links!

Eric Fossum Elected to The National Academy of Engineering

Dartmouth reports that its Professor of Engineering Eric Fossum has been elected to The National Academy of Engineering (NAE)—a part of the National Academies, which includes the NAE, the National Academy of Sciences (NAS), the Institute of Medicine (IOM), and the National Research Council (NRC). The NAE cites Fossum’s principal engineering accomplishment to be "inventing and developing the CMOS active-pixel image sensor and camera-on-a-chip."

"It is truly an honor to be recognized at this level by my fellow engineers," says Fossum. "I am regularly astonished by the many ways the technology impacts people’s lives here on Earth through products that we didn’t even imagine when it was first invented for NASA. I look forward to continuing to teach and work with the students and faculty at Dartmouth to explore the next generation of image-capturing devices."

Fossum has published more than 250 technical papers and holds over 140 U.S. patents. He is a Fellow member of the IEEE and a Charter Fellow of the National Academy of Inventors. He has received the IBM Faculty Development Award, the National Science Foundation Presidential Young Investigator Award, and the JPL Lew Allen Award for Excellence.

"This is the highest honor the engineering community bestows," says Thayer School Dean Joseph Helble, "It recognizes Eric’s seminal contributions as an engineer, technology developer, and entrepreneur. His work has enabled microscale imaging in areas that were unimaginable even a few decades ago, and has led directly to the cellphone and smartphone cameras that are taken for granted. We are honored to have someone of his caliber to oversee our groundbreaking Ph.D. Innovation Program."

Congratulations, Eric!

CNBC: Sony to Focus on Image Sensors

Mykola Golovko, global consumer electronics industry analyst at Euromonitor International, says in an interview with CNBC that he expects Sony to shift its main focus to image sensors. Currently, 80% of Sony's sensor volume goes to mobile phones, but most of the money comes from DSLR sensor sales, Golovko says. One of Sony's challenges is to improve profits in its high-volume mobile applications, he says at the end of the interview (Adobe Flash player required).

Himax Reports Q4 2012 Results

Himax CEO Jordan Wu talks about the state of the image sensor business in Q4 2012 and beyond:

"Our CMOS image sensors delivered a phenomenal growth during the fourth quarter of 2012, mainly due to numerous design-wins in smartphone, tablet, laptop and surveillance applications. We currently offer mainstream and entry-level sensor products with pixel counts of up to 5 mega and are on track to release a new 8 mega pixel product soon. However, the Q1 prospect for CMOS image sensor looks gloomy as China market is going through correction and many of the customers adopting our new sensor products are still finishing up their product tuning.

Notwithstanding the short-term downturn, we do expect the sales of this product line to surge in 2013, boosted by shipments of our new products, many of which were only launched in the second half of last year. We also expect to break into new and leading smartphone brands and further penetrate the tablet, IP Cam, surveillance and automotive application markets."

Omnivision and Aptina Sensors Inside Blackberry Z10

Chipworks: The Z10 smartphone, which is supposed to begin Blackberry's comeback, relies on Aptina's secondary front sensor and Omnivision's OV8830 BSI-2 primary sensor.

The primary camera ISP is Fujitsu Milbeaut MB80645C, typically used in DSCs.

Thursday, February 07, 2013

SMIC CIS Sales Reach 5% to 7.5% of Revenue in 2012

Seeking Alpha published the SMIC Q4 2012 earnings call transcript. The company's CEO, TY Chiu, says:

"The first fab [BSI] chip demonstrated good image quality, the complete BSI process technology is targeted to contribute revenue in 2014. This will serve the market for higher resolution of phone cameras and high performance video cameras."

"Indeed I think the CIS already is a significant portion of our revenue. The CIS, so after – at last year, it is near to between 5% to 7.5% of our revenue coming from the CIS, okay. And BSI, we expect that definitely will enlarge our access to this market. We believe that by fairly 2014, we should have a reasonable amount of our CIS that comes with BSI technology."

The reported annual revenue is $1.7B, so CIS sales are roughly $85M to $128M, or about $100M. Image sensors are named as one of the main areas that contributed to 2012 revenue growth.
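The CIS sales figure is a back-of-the-envelope estimate. A quick sketch of the arithmetic, assuming the quoted 5% to 7.5% share applies to the full $1.7B annual revenue (the variable names are illustrative only):

```python
# Rough CIS revenue estimate from the figures quoted in the earnings call.
# Assumption: the 5% to 7.5% share applies to the full-year revenue.
annual_revenue_musd = 1700.0          # reported annual revenue, in $M
share_low, share_high = 0.05, 0.075   # CIS share of revenue, as quoted

cis_low = annual_revenue_musd * share_low
cis_high = annual_revenue_musd * share_high
cis_mid = (cis_low + cis_high) / 2

print(f"CIS sales: ${cis_low:.1f}M to ${cis_high:.1f}M (midpoint ${cis_mid:.2f}M)")
```

The range works out to about $85M to $127.5M, so a round figure of $100M sits near the middle of it.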

IISW 2013 is Full

As EF mentioned in comments, it looks like IISW 2013 is oversubscribed by now.

Wednesday, February 06, 2013

VCM Shortages to Ease

Digitimes reports that the tight supply of VCMs is expected to start easing in Q2 2013, as demand for lens modules has declined recently, while major VCM makers including Shicon, TDK, LG-Innotek and Hysonic are ramping up their production.

Imec Reviews Recent Image Sensor Innovations

An Imec article in Solid State Technology reviews the major advances in image sensor technology:

"Interdisciplinarity takes imagers to a higher level" by Els Parton, Piet De Moor, Jonathan Borremans and Andy Lambrechts

Tuesday, February 05, 2013

Rumor: HTC to Introduce Layered "Ultrapixel" Sensor

Pocket Lint started the rumor that HTC's upcoming M7 smartphone will feature an image sensor with "ultrapixels". The HTC M7 sensor will be made up of three 4.3MP sensor layers stacked on top of each other, each sensing a different color. If true, it might represent an interesting cross between Sony's stacked sensor and Foveon technologies.

The new smartphone is expected to be officially announced on Feb. 19.

Image Engineering Announces Tunable Light Source

Image Engineering announces a tunable light source, the iQ-LED. The new source is composed of 22 different software-controlled LED channels, which makes it possible to generate the spectra of standard light sources such as D50, D55, D65, A, B, C, etc. with very high accuracy:

[Figure: The 22 channels of the iQ-LED source]

[Figure: The user interface of the control software in prototype stage with light set D65]
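To give a feel for how a multi-channel LED source can approximate a target spectrum, here is a minimal sketch. It is not Image Engineering's method: the Gaussian LED shapes, evenly spaced peaks, 12 nm width, and flat equal-energy target are all assumptions made for illustration, and the fit uses plain projected gradient descent so that channel drive levels stay non-negative.

```python
import math

# All spectral data below is hypothetical; real LED spectra are not Gaussian.
wavelengths = list(range(400, 701, 5))                # nm, 61 samples
centers = [400 + i * 300 / 21 for i in range(22)]     # 22 assumed peak wavelengths
WIDTH = 12.0                                          # assumed spectral width, nm

def gaussian(wl, center, width):
    return math.exp(-0.5 * ((wl - center) / width) ** 2)

# basis[k][j]: relative emission of channel k at wavelength sample j
basis = [[gaussian(wl, c, WIDTH) for wl in wavelengths] for c in centers]
target = [1.0] * len(wavelengths)                     # flat, equal-energy target

def mix(weights):
    """Spectrum produced by driving each channel at the given weight."""
    return [sum(w * ch[j] for w, ch in zip(weights, basis))
            for j in range(len(wavelengths))]

def rms_error(weights):
    out = mix(weights)
    return math.sqrt(sum((o - t) ** 2 for o, t in zip(out, target)) / len(target))

def fit_weights(steps=500, rate=0.02):
    """Least-squares fit by gradient descent, clamping weights at zero."""
    weights = [0.0] * len(basis)
    for _ in range(steps):
        resid = [o - t for o, t in zip(mix(weights), target)]
        for k, ch in enumerate(basis):
            grad = sum(r * v for r, v in zip(resid, ch))
            weights[k] = max(0.0, weights[k] - rate * grad)
    return weights

weights = fit_weights()
print("RMS error with all channels off:", rms_error([0.0] * 22))
print("RMS error after fitting:", rms_error(weights))
```

A real system would start from measured channel spectra and tabulated illuminant data, and would likely use a dedicated non-negative least-squares solver rather than this hand-rolled loop.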

Monday, February 04, 2013

Panasonic Develops Micro Color Splitters

Panasonic announces that it has found the holy grail of color image sensor design: a way to implement color separation with no losses. Conventional sensors use a Bayer CFA that blocks 50-70% of the incoming light before it even reaches the sensor. Panasonic has developed unique "micro color splitters" that control the diffraction of light at a microscopic level, directing each color to a respective pixel, and in that way has achieved approximately double the color sensitivity of conventional sensors that use color filters.

This development is described in general terms in the Advance Online Publication version of Nature Photonics issued on February 3, 2013.

The developed technology has the following features:

- Using color alignment, which can use light more efficiently, instead of color filters, vivid color photographs can be taken at half the light levels needed by conventional sensors.

- Micro color splitters can simply replace the color filters in conventional image sensors, and are not dependent on the type of image sensor (CCD or CMOS) underneath.

- Micro color splitters can be fabricated using inorganic materials and existing semiconductor fabrication processes.

- A unique method of analysis and design based on wave optics that permits fast and precise computation of wave-optics phenomena.

- Device optimization technologies for creating micro color splitters that control the phase of the light passing through a transparent and highly-refractive plate-like structure to separate colors at a microscopic scale using diffraction.

- Layout technologies and unique algorithms that allow highly sensitive and precise color reproduction by combining the light that falls on detectors separated by the micro color splitters and processing the detected signals.
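The signal processing mentioned in the last item can be illustrated with a toy model. Panasonic's example describes pixels that record white + red, white - red, white + blue, and white - blue. The sketch below assumes an idealized, lossless case in which white is simply R + G + B and each of the four mixed signals is available for the same scene point; the function name and the test color are hypothetical, and the real algorithm is certainly more involved.

```python
def decode_mixed_signals(w_plus_r, w_minus_r, w_plus_b, w_minus_b):
    """Recover (R, G, B) from four idealized mixed color signals.

    Assumes W = R + G + B and perfect splitting; both are simplifications.
    """
    # Each pair gives an independent estimate of white; average the two.
    white = ((w_plus_r + w_minus_r) / 2 + (w_plus_b + w_minus_b) / 2) / 2
    red = (w_plus_r - w_minus_r) / 2
    blue = (w_plus_b - w_minus_b) / 2
    green = white - red - blue
    return red, green, blue


# Hypothetical scene color, used only to synthesize the mixed signals.
r, g, b = 0.2, 0.5, 0.3
w = r + g + b
decoded = decode_mixed_signals(w + r, w - r, w + b, w - b)
print(decoded)
```

Because every pixel in this model receives the full white signal plus or minus one color, no light is discarded at a filter, which is the intuition behind the claimed sensitivity gain.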

Device optimization technologies leading to the creation of micro color splitters that control the phase of the light passing through a transparent and highly-refractive plate-like structure and use diffraction to separate colors on a microscopic scale:

Color separation of light in micro color splitters is caused by a difference in refractive index between a) the plate-like high refractive material that is thinner than the wavelength of the light and b) the surrounding material. Controlling the phase of traveling light by optimizing the shape parameters causes diffraction phenomena that are seen only on a microscopic scale and which cause color separation. Micro color splitters are fabricated using a conventional semiconductor manufacturing process. Fine-tuning their shapes causes the efficient separation of certain colors and their complementary colors, or the splitting of white light into blue, green, and red like a prism, with almost no loss of light.

Layout technologies and unique algorithms that enable highly sensitive and precise color reproduction by overlapping diffracted light on detectors separated by micro color splitters and processing the detected signals: